Every AI-Assisted Repository Needs a HUMAN.md

Being able to write and ship code used to be a proxy for expertise.

But when anyone can vibe-code a repository into existence, shipping code is no longer a strong signal of expertise.

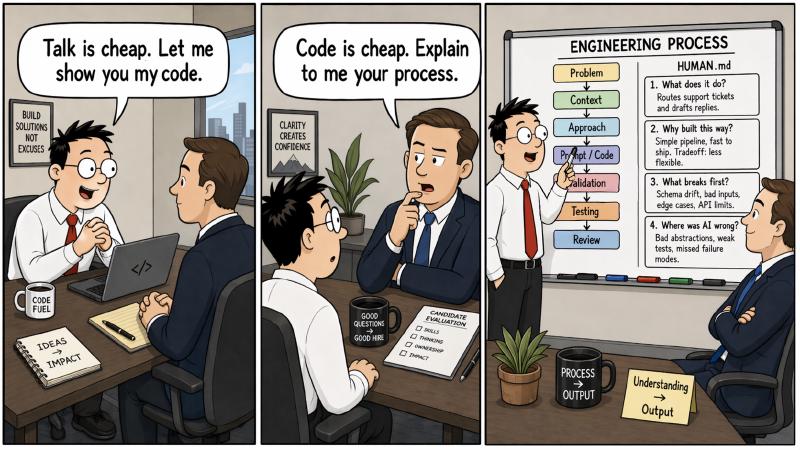

This becomes a problem when you try to assess a candidate's work and experience in an interview: you are looking at a lot of code that looks finished, but it tells you almost nothing about whether the person who shipped it actually understands the system.

To find out whether a candidate actually worked on the system they list on their resume or GitHub account, we typically ask a series of questions about their past projects. We try to understand their reasoning, the trade-offs they made, how well they understand how their system breaks, and whether they understand the users they built it for.

To separate human judgment from AI slop, I think every AI-assisted repository should have a HUMAN.md file that documents the human thoughts and decisions made during development.

Intention

A HUMAN.md is not a README extension. It is meant to be an explanation artifact that shows there is a human behind the repo. It serves four purposes:

- It makes human understanding visible

We are already seeing people ship systems they cannot clearly explain. A HUMAN.md makes the builder’s understanding visible at the repository level instead of leaving it buried in prompts, chat logs, or hand-waving.

- It documents human judgment

Human judgment here means judgment under constraints. It shows up in what you chose not to build, which trade-offs you accepted, which abstractions you rejected, and where you overruled the AI.

- It defines the blast radius

AI is good at producing output and often weak at reasoning about system fragility. A serious HUMAN.md states the dependencies, trust boundaries, failure modes, and the first things that break when environments or specifications change. These are things that LLMs do not reason about easily.

- It becomes a credibility signal

In an era of abundant generated code, the scarce thing is not output. It is demonstrated comprehension. A HUMAN.md shows that the repo contains more than generated artifacts. It shows that a human can account for the system.

The Four Questions

To make this more concrete, a good HUMAN.md should answer these four questions in detail:

What does this system actually do?

Why was it built this way?

What would break first?

Where was the AI wrong, and what did the human have to correct?
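If you want a starting point, the four questions can be turned into a skeleton file plus a quick check for empty sections. This is only a rough sketch: the article does not prescribe a layout, so the use of `## ` markdown headings and the helper names `skeleton` and `unanswered` are my own assumptions for illustration.

```python
# Hypothetical helpers; the heading layout is an assumption, not a standard.
QUESTIONS = [
    "What does this system actually do?",
    "Why was it built this way?",
    "What would break first?",
    "Where was the AI wrong, and what did the human have to correct?",
]


def skeleton() -> str:
    """Return an empty HUMAN.md with one '## ' heading per question."""
    parts = ["# HUMAN.md", ""]
    for question in QUESTIONS:
        parts += [f"## {question}", "", ""]
    return "\n".join(parts)


def unanswered(text: str) -> list[str]:
    """Return the questions whose section is missing or has no body text."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in text.splitlines():
        if line.startswith("## "):
            # A new section starts; collect its non-blank lines below.
            current = line[3:].strip()
            sections[current] = []
        elif current is not None and line.strip():
            sections[current].append(line)
    return [q for q in QUESTIONS if not sections.get(q)]
```

Running `unanswered` on the text of a HUMAN.md only tells you whether each section has something in it. It says nothing about whether the answers show real understanding, which is still a job for a human reader.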

Where it fits

AGENT.md explains to the agent how to navigate the project. README.md explains how to use the software. HUMAN.md explains whether the builder actually understands it.

I like to think of it as an interview transcript.

And if you want to test whether a HUMAN.md was generated by an AI, read it, then point your AI at it and at the repo, and look for contradictions between the two.